Research

At HDMI Lab, we pursue AI that is not only technically powerful but also aligned with human values, grounded in high-quality data, and capable of autonomous action in complex real-world environments. Our research spans the full pipeline: understanding human cognition and behavior, building intelligent systems that learn from trustworthy data, and deploying autonomous agents in industrial settings. By bridging human-centered design, data science, and agentic systems, we aim to develop AI that people can trust, understand, and collaborate with.

Human-Centered AI

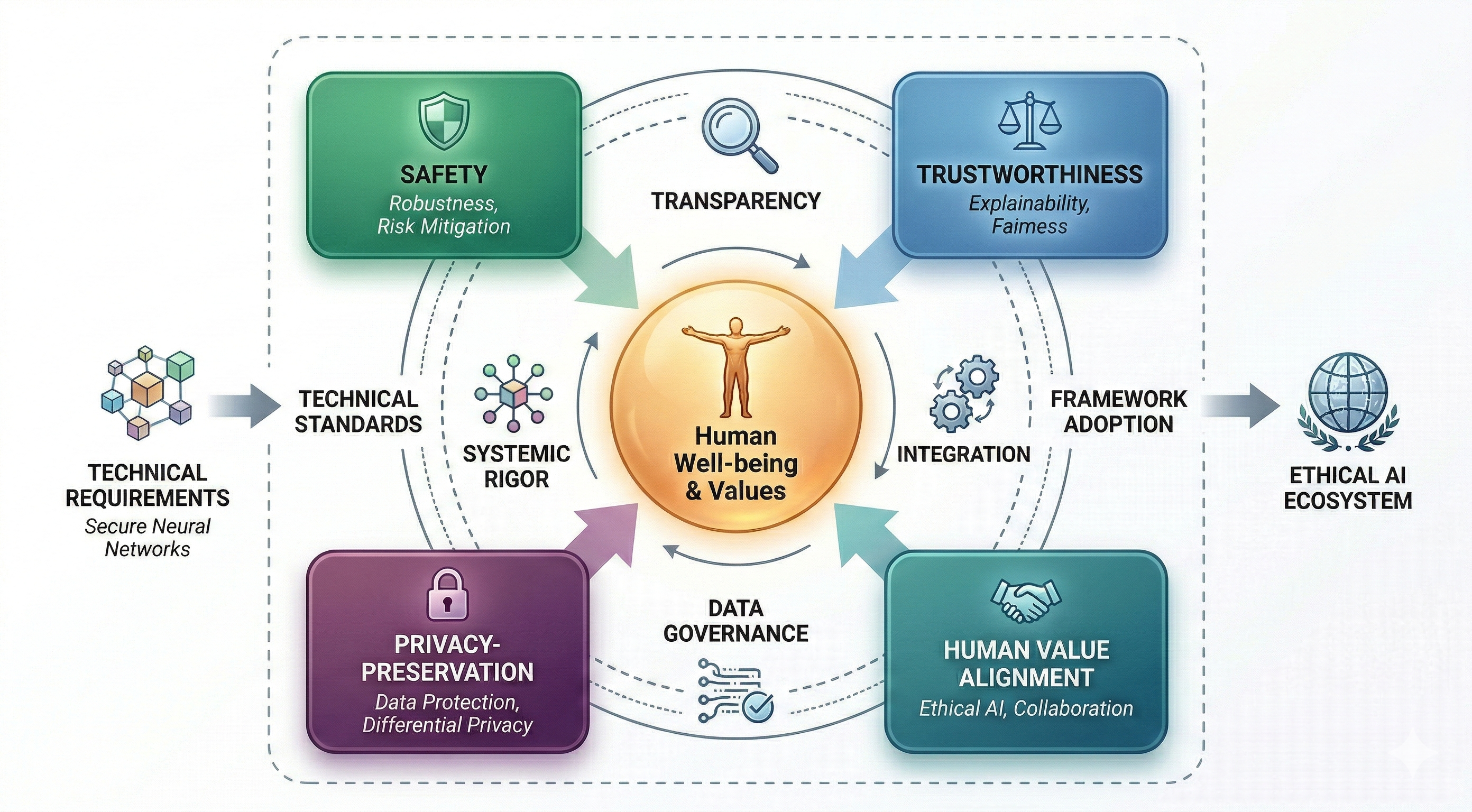

We develop AI systems that are trustworthy, safe, and aligned with human values. Our work focuses on ensuring that AI decisions are transparent, fair, and explainable, so that humans remain at the center of the decision-making process. Key research topics include:

- Explainable AI (XAI): Designing interpretable models and post-hoc explanation methods that help users understand and trust AI decisions.

- Trustworthy AI: Investigating human trust calibration in automation, detecting hallucinations in large language models, and developing methods for AI safety and reliability.

- Privacy-Preserving AI: Building federated learning frameworks and differential privacy techniques that protect sensitive user data while maintaining model performance.

- Brain-Computer Interfaces (BCI): Leveraging EEG signals for emotion recognition, cognitive state monitoring, and neural decoding to bridge human cognition and intelligent systems.

- Machine Psychology: Applying psychological frameworks to probe the cognitive and behavioral patterns of large language models, to better understand how these systems reason, exhibit biases, and interact with humans.
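
As a rough illustration of the differential privacy idea mentioned in the list above, the sketch below releases a noisy mean using the Laplace mechanism. It is a minimal example under stated assumptions, not a description of the lab's own methods: the function names `laplace_noise` and `private_mean`, the clipping bounds, and the "replace one record" notion of neighboring datasets are all choices made here for illustration.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) noise as the difference of two exponentials."""
    # random.expovariate takes a rate; rate 1/scale gives mean `scale`.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_mean(values, lower, upper, epsilon, rng):
    """Return an epsilon-differentially-private estimate of the mean.

    Assumes the dataset size is public and that neighboring datasets
    differ by replacing one record (illustrative assumptions).
    """
    # Clip each value so one record can shift the mean by a bounded amount.
    clipped = [min(max(v, lower), upper) for v in values]
    n = len(clipped)
    # Sensitivity of the mean when one clipped record is replaced.
    sensitivity = (upper - lower) / n
    true_mean = sum(clipped) / n
    return true_mean + laplace_noise(sensitivity / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random(0)
    ages = [23, 35, 41, 29, 52, 38]  # made-up data for the example
    print(private_mean(ages, lower=0, upper=100, epsilon=1.0, rng=rng))
```

A smaller `epsilon` gives stronger privacy but adds more noise, which is the privacy versus performance trade-off noted above.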

Data-Centric Intelligence

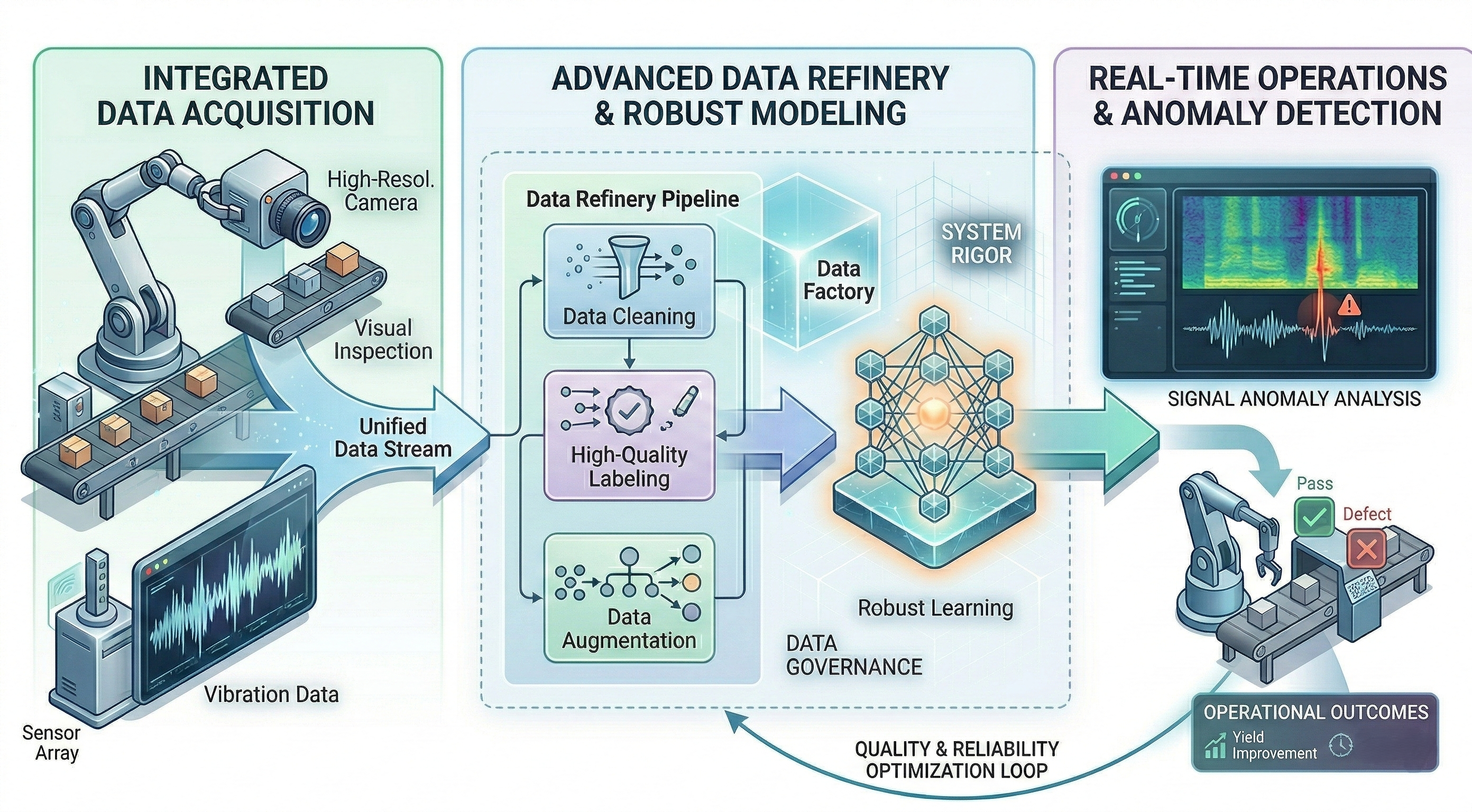

We believe that the quality of data is just as important as the sophistication of models. Our research focuses on systematically improving datasets and developing efficient learning paradigms to build more robust AI systems. Key research topics include:

- Data Quality & Curation: Developing systematic approaches to assess, clean, and curate datasets, ensuring that models are trained on reliable and representative data.

- Industrial AI & Fault Detection: Applying data-driven AI to real-world manufacturing challenges, including speaker defect analysis, quality control, and process optimization.

- Knowledge Distillation: Compressing large models into lightweight, deployable versions while preserving as much performance as possible, enabling edge and on-device deployment.

- Multi-Task & Transfer Learning: Building models that learn shared representations across related tasks to improve generalization and data efficiency.
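
To make the knowledge distillation topic above more concrete, here is a minimal sketch of a commonly used distillation objective: a temperature-softened KL term between teacher and student predictions, mixed with the usual cross-entropy on the true label. The function names, the default `temperature` and `alpha`, and the example logits are illustrative assumptions, not the lab's actual training setup.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label,
                      temperature=2.0, alpha=0.5):
    """Weighted sum of a soft-target KL term and hard-label cross-entropy."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # KL(teacher || student) on the temperature-softened distributions.
    kl = sum(pt * math.log(pt / ps)
             for pt, ps in zip(p_teacher, p_student) if pt > 0)
    # Standard cross-entropy of the student against the true label.
    hard = -math.log(softmax(student_logits)[label])
    # The temperature**2 factor keeps the soft-term gradients on a
    # comparable scale as the temperature changes.
    return alpha * temperature ** 2 * kl + (1 - alpha) * hard


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]  # made-up logits for the example
    student = [2.5, 1.5, 0.2]
    print(distillation_loss(student, teacher, label=0))
```

When the student reproduces the teacher's logits exactly, the KL term vanishes and only the hard-label term remains.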

Agentic AI

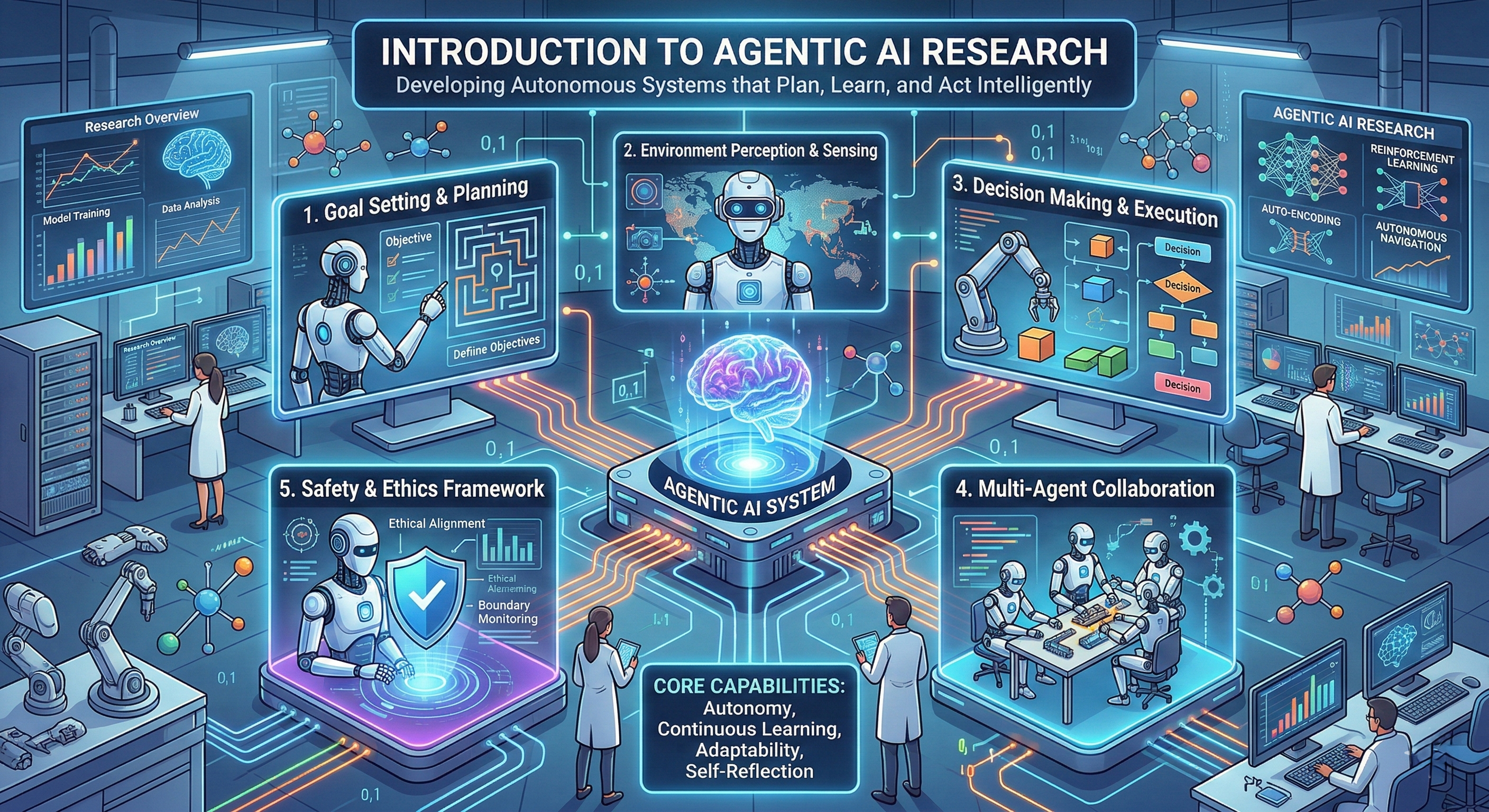

We build autonomous AI agents that can reason, plan, and take action in complex real-world environments. Moving beyond passive prediction, our research aims to create AI that actively interacts with its environment and collaborates with humans to accomplish goals. Key research topics include:

- LLM-Based Agents: Designing autonomous agents powered by large language models that can perform multi-step reasoning, tool use, and decision-making in open-ended environments.

- Reinforcement Learning from Human Feedback (RLHF): Aligning AI behavior with human preferences and values through reward modeling and feedback-driven optimization.

- Subliminal Learning: Exploring implicit and latent learning mechanisms that enable AI systems to acquire knowledge from subtle, indirect, or subconscious-level signals beyond explicit supervision.

- Human-Agent Collaboration: Investigating how AI agents and humans can work together effectively, ensuring that agents are aligned with human intent and can be steered by human oversight.
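
As a small illustration of the reward modeling step mentioned under RLHF above, the sketch below computes a pairwise preference loss of the Bradley-Terry form, which penalizes a reward model when it scores the human-preferred response below the rejected one. The function name `preference_loss` and the scalar rewards are hypothetical; in practice the rewards would come from a learned model.

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Return -log(sigmoid(reward_chosen - reward_rejected))."""
    margin = reward_chosen - reward_rejected
    # Stable softplus(-margin): avoids overflow for large |margin|.
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


if __name__ == "__main__":
    # The loss shrinks as the preferred response is scored further ahead.
    for chosen, rejected in [(0.0, 0.0), (2.0, 0.0), (0.0, 2.0)]:
        print(chosen, rejected, preference_loss(chosen, rejected))
```

With equal rewards the loss is log 2 (about 0.693), reflecting a model that cannot distinguish the two responses.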